Introduction

Feature engineering is a crucial step in any machine learning project. Its objective is to convert raw data into meaningful features that can enhance model performance. It can determine the success or failure of a machine learning model, making it essential for data professionals to master.

A well-structured Data Science Course in Mumbai will always emphasize the importance of feature engineering, which bridges the gap between raw data and machine learning algorithms. Below is a guide to implementing feature engineering effectively.

Understanding Feature Engineering

Feature engineering is the process of using domain knowledge to create new input variables or features from existing ones to increase the predictive power of machine learning models. It often involves:

- Cleaning data

- Handling missing values

- Encoding categorical variables

- Normalizing numerical features

- Creating interaction terms

- Extracting date or text features

These tasks help structure the data to allow models to learn patterns more effectively.

Data Collection and Preprocessing

Before implementing feature engineering, you need to collect and prepare your data:

- Data Cleaning: Remove duplicates, correct errors, and ensure consistency in formats.

- Handling Missing Data: Choose appropriate imputation strategies such as mean/mode imputation, forward/backward fill, or predictive imputation.

- Outlier Detection: Use techniques such as Z-score, IQR, or isolation forests to detect and handle anomalies.

Preprocessing is foundational because feature engineering builds on this clean and structured dataset.
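
The three preprocessing steps above can be sketched with pandas. This is a minimal illustration, not a recommended recipe: the toy data, the column names `age` and `income`, the choice of mean imputation, and the conventional 1.5 × IQR threshold are all assumptions made for the example.

```python
import numpy as np
import pandas as pd

# Hypothetical toy data: one duplicate row, one missing age, one extreme income.
df = pd.DataFrame({
    "age": [25, 32, 32, np.nan, 41, 38],
    "income": [45_000, 52_000, 52_000, 48_000, 1_000_000, 50_000],
})

# Data cleaning: drop exact duplicate rows.
df = df.drop_duplicates().reset_index(drop=True)

# Handling missing data: mean imputation for a numeric column.
df["age"] = df["age"].fillna(df["age"].mean())

# Outlier detection: flag values more than 1.5 * IQR outside the quartiles.
q1, q3 = df["income"].quantile([0.25, 0.75])
iqr = q3 - q1
lower, upper = q1 - 1.5 * iqr, q3 + 1.5 * iqr
df["income_outlier"] = ~df["income"].between(lower, upper)
```

Flagging outliers in a separate column, rather than dropping them immediately, keeps the decision about how to handle them open until you have looked at the rows involved.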

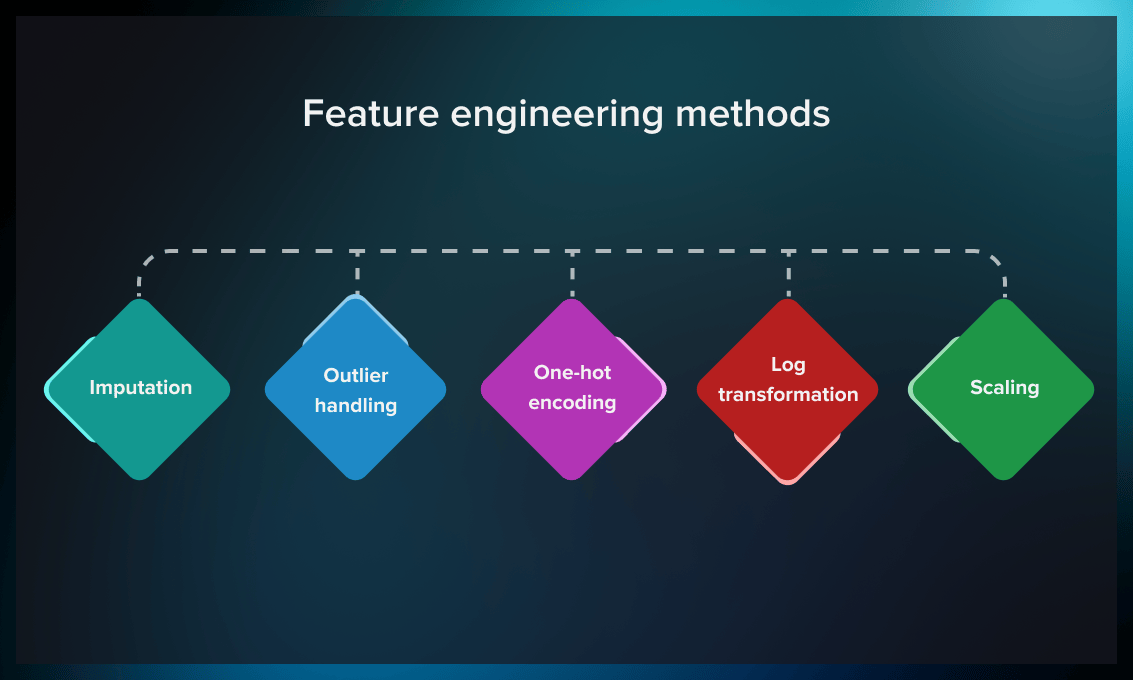

Types of Feature Engineering Techniques

Let us break down key feature engineering techniques commonly used in practice:

Encoding Categorical Variables

Most models require numeric input, so categorical variables must be converted into numerical representations. Methods include:

- Label Encoding: Assigns each unique category an integer value.

- One-Hot Encoding: Creates binary columns for each category.

- Target Encoding: Replaces categories with the average of the target variable for that category.

The most suitable encoding technique is governed by the cardinality of the feature and the model type.
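
As a rough sketch, the three encodings can be produced with pandas alone. The toy data and the column names `city` and `bought` are hypothetical. The target encoding here is computed on the full data only to keep the example short; in a real project the category means would normally be computed on training data only.

```python
import pandas as pd

# Hypothetical toy data; "city" is the categorical feature, "bought" the target.
df = pd.DataFrame({
    "city": ["Mumbai", "Pune", "Mumbai", "Delhi", "Pune", "Mumbai"],
    "bought": [1, 0, 1, 0, 1, 0],
})

# Label encoding: one integer code per category (codes follow sorted category order).
df["city_label"] = df["city"].astype("category").cat.codes

# One-hot encoding: one binary column per category.
one_hot = pd.get_dummies(df["city"], prefix="city")

# Target encoding: replace each category with the mean of the target for that category.
city_means = df.groupby("city")["bought"].mean()
df["city_target"] = df["city"].map(city_means)
```

Label encoding implies an ordering that tree-based models can usually tolerate but linear models may misread, while one-hot encoding grows by one column per category, which is why cardinality and model type both matter.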

Scaling Numerical Features

Numerical features often need scaling because many models perform better when features are on a similar scale. Common techniques include:

- Standardization: Subtract the mean and divide by standard deviation.

- Min-Max Scaling: Scales data between 0 and 1.

- Robust Scaling: Uses median and IQR, less sensitive to outliers.

This step is especially important for algorithms that depend on distances between points, such as KNN and SVM; tree-based models are largely insensitive to feature scale.
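
A small sketch of the three scalers using scikit-learn's preprocessing module. The single-feature array, with one deliberately large value, is made up for illustration.

```python
import numpy as np
from sklearn.preprocessing import MinMaxScaler, RobustScaler, StandardScaler

# Hypothetical single feature with one large value.
X = np.array([[1.0], [2.0], [3.0], [4.0], [100.0]])

X_std = StandardScaler().fit_transform(X)    # (x - mean) / standard deviation
X_minmax = MinMaxScaler().fit_transform(X)   # rescaled to the range [0, 1]
X_robust = RobustScaler().fit_transform(X)   # (x - median) / IQR
```

With this data the min-max version squeezes the first four values into a narrow band near 0, while the robust version keeps them spread around the median, which illustrates why robust scaling is less sensitive to outliers.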

Binning

Transform continuous variables into categorical bins:

- Equal-width binning: All bins have the same width.

- Equal-frequency binning: All bins contain approximately the same number of observations.

Binning can capture non-linear relationships and make models more interpretable.
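
Both binning strategies are available in pandas. The sketch below assumes a made-up series of ages and an arbitrary choice of four bins.

```python
import pandas as pd

# Hypothetical ages; the number of bins (4) is an arbitrary choice.
ages = pd.Series([18, 22, 25, 30, 38, 45, 52, 70], name="age")

# Equal-width binning: 4 bins that each span the same range of values.
equal_width = pd.cut(ages, bins=4, labels=False)

# Equal-frequency binning: 4 bins with roughly the same number of rows.
equal_freq = pd.qcut(ages, q=4, labels=False)
```

Here the equal-width bins are unevenly filled because the ages cluster at the low end, whereas the equal-frequency bins each hold two values.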

Feature Extraction

Deriving new features from existing data can uncover hidden patterns:

- Date Features: From a timestamp, you can extract day, month, year, weekday, etc.

- Text Features: Use techniques like TF-IDF, Bag of Words, or word embeddings to convert text into numeric form.

- Image Features: Use convolutional layers in deep learning or pre-trained models to extract features from images.

Advanced feature extraction techniques such as these are increasingly used in natural language processing and computer vision applications.

Interaction Features

Create new features by combining existing ones:

- Polynomial Features: Powers of individual variables and products (interaction terms) between variables.

- Ratios and Differences: For example, income-to-loan ratio or age difference between individuals.

These help capture complex relationships that linear models might miss.

Feature Selection

Feature engineering also involves feature selection, which improves model performance by reducing noise and overfitting.

Techniques include:

- Filter Methods: Use statistical techniques like correlation coefficients, chi-square, or mutual information.

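- Wrapper Methods: Evaluate candidate feature subsets by repeatedly fitting a model; for example, Recursive Feature Elimination (RFE).

- Embedded Methods: Selection happens as part of model training, as with LASSO (whose L1 penalty can shrink coefficients to exactly zero) or tree-based importance scores. Ridge shrinks coefficients but does not set them to zero, so it regularizes rather than selects.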

Feature selection ensures the model is not overwhelmed with redundant or irrelevant features, leading to better generalization.
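
One example of each family, sketched with scikit-learn on synthetic regression data. The dataset, the choice of three features to keep, and the LASSO penalty `alpha=1.0` are assumptions for the example; `f_regression` stands in for the correlation-based filter, since it scores each feature by its linear relationship with the target.

```python
from sklearn.datasets import make_regression
from sklearn.feature_selection import RFE, SelectKBest, f_regression
from sklearn.linear_model import Lasso, LinearRegression

# Synthetic data: 10 features, of which 3 actually drive the target.
X, y = make_regression(
    n_samples=200, n_features=10, n_informative=3, noise=5.0, random_state=0
)

# Filter method: keep the 3 features with the strongest univariate linear signal.
filt = SelectKBest(score_func=f_regression, k=3).fit(X, y)
X_filter = filt.transform(X)

# Wrapper method: refit a model repeatedly, dropping the weakest feature each round.
rfe = RFE(LinearRegression(), n_features_to_select=3).fit(X, y)
X_wrapper = rfe.transform(X)

# Embedded method: LASSO's L1 penalty sets some coefficients exactly to zero.
lasso = Lasso(alpha=1.0).fit(X, y)
n_kept = int((lasso.coef_ != 0).sum())
```

Filter methods are the cheapest because they never fit the final model, while wrapper methods cost more but take feature interactions within the model into account.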

Automation with Feature Engineering Tools

Several libraries and tools can help automate the feature engineering process:

- Featuretools: Automates feature engineering for relational datasets.

- tsfresh: Specialized in time-series feature extraction.

- Scikit-learn Pipelines: Allows chaining of preprocessing and model training steps.

- AutoML Tools: Libraries like H2O.ai and Auto-sklearn perform end-to-end feature engineering and model selection.

However, automation should complement, not replace, domain expertise. An experienced practitioner will know how to balance automation with hands-on insight.
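
Of the tools listed, scikit-learn Pipelines are the easiest to show in a few lines. The sketch below chains imputation, scaling, one-hot encoding, and a classifier; the toy data, column names, and choice of logistic regression are assumptions for illustration.

```python
import pandas as pd
from sklearn.compose import ColumnTransformer
from sklearn.impute import SimpleImputer
from sklearn.linear_model import LogisticRegression
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import OneHotEncoder, StandardScaler

# Hypothetical toy data; column names are illustrative.
X = pd.DataFrame({
    "age": [25, 32, None, 41, 38, 29],
    "city": ["Mumbai", "Pune", "Mumbai", "Delhi", "Pune", "Delhi"],
})
y = [0, 1, 0, 1, 1, 0]

# Numeric columns: impute missing values, then standardize.
numeric = Pipeline([
    ("impute", SimpleImputer(strategy="mean")),
    ("scale", StandardScaler()),
])

# Route each column group to its own preprocessing.
preprocess = ColumnTransformer([
    ("num", numeric, ["age"]),
    ("cat", OneHotEncoder(handle_unknown="ignore"), ["city"]),
])

# Preprocessing and model training chained into a single estimator.
model = Pipeline([
    ("preprocess", preprocess),
    ("clf", LogisticRegression()),
])

model.fit(X, y)
preds = model.predict(X)
```

Because the whole chain is one estimator, it can be cross-validated or saved as a unit, and the preprocessing steps are refit on whatever data `fit` receives.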

Feature Engineering for Different Data Types

a) Numerical Data

- Log transformations to reduce skewness

- Polynomial and spline features to capture non-linearity

- Lag and rolling features in time series

b) Categorical Data

- Hierarchical grouping based on business logic

- Frequency encoding

- Cross features (for example, combining “Region” and “Product” into one)

c) Time Series Data

- Lag features

- Rolling averages and exponential moving averages

- Seasonal decomposition features

d) Text Data

- Sentiment scores

- N-grams and syntactic dependencies

- Named entity recognition (NER) outputs

The diversity of approaches shows why hands-on practice is invaluable for mastering these methods.
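
A few of these techniques can be sketched with pandas on a hypothetical daily sales table: a log transformation, frequency encoding, a cross feature, and lag, rolling, and exponential moving average features. The column names and the window sizes are assumptions, and the text-based features are omitted because they usually require an NLP library.

```python
import numpy as np
import pandas as pd

# Hypothetical daily sales data; all column names are illustrative.
df = pd.DataFrame({
    "date": pd.date_range("2024-01-01", periods=6, freq="D"),
    "region": ["West", "West", "North", "West", "North", "West"],
    "product": ["A", "B", "A", "A", "B", "B"],
    "sales": [100.0, 120.0, 90.0, 400.0, 110.0, 130.0],
})

# Numerical: log transform to reduce right skew (log1p also handles zeros).
df["sales_log"] = np.log1p(df["sales"])

# Categorical: frequency encoding and a cross feature.
df["region_freq"] = df["region"].map(df["region"].value_counts(normalize=True))
df["region_product"] = df["region"] + "_" + df["product"]

# Time series: lag, rolling mean, and exponential moving average.
# shift(1) ensures each row only uses values from earlier rows.
df["sales_lag1"] = df["sales"].shift(1)
df["sales_roll3"] = df["sales"].shift(1).rolling(window=3).mean()
df["sales_ema"] = df["sales"].shift(1).ewm(span=3, adjust=False).mean()
```

The first rows of the lag and rolling columns are missing by construction, so they need to be dropped or imputed before training.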

Model Evaluation and Feedback Loop

After feature engineering and model training, evaluate performance using appropriate metrics:

- Classification: Accuracy, precision, recall, F1-score, ROC-AUC

- Regression: RMSE, MAE, R²

- Cross-validation: Use k-fold CV to estimate how well the model generalizes to unseen data

Based on the evaluation, refine your feature set:

- Drop redundant features

- Create new features informed by error analysis, for example by examining the cases where predictions are worst

- Revisit earlier assumptions
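
The evaluation step can be sketched with scikit-learn's `cross_validate`, which reports several of the classification metrics listed above across k folds. The synthetic dataset, the logistic regression model, and the choice of five folds are assumptions for the example.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import cross_validate

# Synthetic binary classification data.
X, y = make_classification(n_samples=300, n_features=8, random_state=0)

# 5-fold cross-validation, scoring each held-out fold on three metrics.
scores = cross_validate(
    LogisticRegression(max_iter=1000), X, y,
    cv=5, scoring=["accuracy", "f1", "roc_auc"],
)

# Average each metric over the folds.
mean_scores = {k: v.mean() for k, v in scores.items() if k.startswith("test_")}
```

Re-running the same evaluation after each change to the feature set gives a consistent basis for deciding whether a new feature is worth keeping.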

Best Practices for Feature Engineering

- Understand the domain: Leverage domain knowledge to inform feature creation.

- Visualize your features: Use plots like histograms, box plots, and pair plots to understand distributions and relationships.

- Iterate frequently: Feature engineering is not a one-time step. Iterate as you learn more from your model’s behaviour.

- Document your transformations: Maintain reproducibility and clarity by documenting every step.

- Avoid data leakage: Ensure no information from the test set or from the future leaks into the training data during feature creation. Fit imputers, scalers, and encoders on the training split only.

These practices are essential for building trustworthy and scalable ML systems.
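
The leakage point is the easiest of these to get wrong, so here is a minimal sketch of one common safeguard: learn the scaling statistics from the training split only, then reuse them on the test split. The random data and the 80/20 split are assumptions for illustration.

```python
import numpy as np
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import StandardScaler

# Hypothetical single numeric feature.
rng = np.random.default_rng(0)
X = rng.normal(loc=10.0, scale=2.0, size=(100, 1))

# Split first, before any statistics are computed.
X_train, X_test = train_test_split(X, test_size=0.2, random_state=0)

# Fit the scaler on training data only, then apply it to both splits.
scaler = StandardScaler().fit(X_train)
X_train_scaled = scaler.transform(X_train)
X_test_scaled = scaler.transform(X_test)
```

Calling `fit_transform` on the full dataset before splitting would let test-set statistics influence the training features, which tends to make evaluation results look better than they are.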

Conclusion

Feature engineering is both an art and a science. It requires creativity, domain knowledge, and technical skill. Whether you are working on a simple linear regression or a complex deep learning pipeline, engineered features often make the biggest difference in model performance.

By mastering techniques such as encoding, scaling, interaction features, and feature selection, data scientists can significantly boost the predictive power of their models. Enrolling in a well-structured Data Scientist Course is one of the most effective ways to gain hands-on experience and develop the intuition needed for effective feature engineering.

With the right mindset and tools, feature engineering can transform raw data into powerful insights—and set your machine learning projects up for success.

Business Name: ExcelR- Data Science, Data Analytics, Business Analyst Course Training Mumbai
